Parasuraman’s Automation Ladder

Clinical Practice can learn quite a bit from aviation

This post is a bit different from our usual territory: I wanted to share what I have learned from integrating AI into my clinical practice, a subject where our two favorite topics, clinical innovation and aviation, converge. As a self-professed AvGeek, I see Parasuraman’s Automation Ladder as a cornerstone of aviation efficiency. In the decades leading up to their 2000 paper, Parasuraman, Sheridan, and Wickens studied how pilots interacted with increasingly automated cockpits, and how automation failures or mode confusion contributed to accidents.

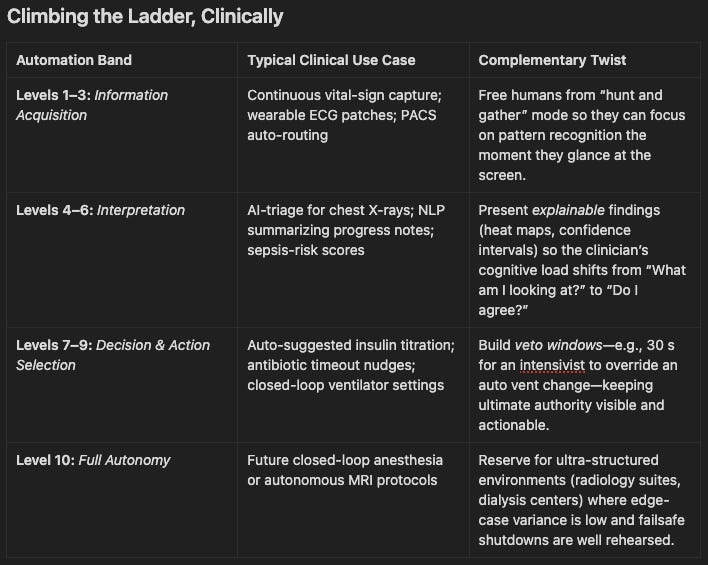

Along those lines, when clinicians talk about “AI in the loop,” we rarely stop to define how much of the loop we’re willing to hand over. Parasuraman, Sheridan, and Wickens’s 10-level automation scale, spanning fully manual operation (Level 1) to full autonomy (Level 10), offers a vocabulary for that discussion.

Parasuraman’s “automation ladder” emerged from efforts in the late 1990s to bring rigor to how we think about human–automation interaction. Dr. Raja Parasuraman and colleagues recognized that simply labeling a system “automated” or “manual” missed the rich spectrum of ways automation can support, or undermine, human operators. Drawing on cognitive psychology and human factors engineering, they proposed a ten-level taxonomy (often depicted as a “ladder”) that ranges from Level 1 (the human does everything) to Level 10 (the automation does everything, and the human is out of the loop).

Rather than treating the ladder as a one-way ascent to robo-doctors, it can serve as a design dial: a way to tune AI so that it complements rather than competes with human expertise.

Why a 25-Year-Old Taxonomy Still Matters

Healthcare workflows are a mosaic of micro-tasks (glucose checks, image reads, dose calculations, discharge planning), each with its own risk tolerance, cognitive demand, and data density. Parasuraman’s hierarchy dissects each task into four functions (acquire, analyze, decide, act) and asks: Who does what? That question is central for safety regulators, malpractice carriers, and, most importantly, the clinicians expected to sign the chart.
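One way to make the “who does what?” question concrete is to record a separate automation level for each of the four functions. The Python sketch below is only an illustration: the `TaskProfile` class, the example task, its level values, and the threshold of 5 for “human keeps the final say” are all assumptions, not part of the original taxonomy.

```python
from dataclasses import dataclass

STAGES = ("acquire", "analyze", "decide", "act")


@dataclass(frozen=True)
class TaskProfile:
    """Automation level (1-10) per function for one micro-task (hypothetical)."""
    name: str
    acquire: int
    analyze: int
    decide: int
    act: int

    def __post_init__(self):
        # Reject levels outside the ten-rung ladder.
        for stage in STAGES:
            level = getattr(self, stage)
            if not 1 <= level <= 10:
                raise ValueError(f"{stage} level must be 1-10, got {level}")

    def human_led_stages(self, threshold: int = 5):
        """Stages at or below `threshold`, i.e. where the human still decides.

        The default threshold of 5 is an assumption made for this sketch.
        """
        return [s for s in STAGES if getattr(self, s) <= threshold]


# Illustrative values only, not clinical recommendations.
glucose_check = TaskProfile("glucose check", acquire=8, analyze=6, decide=4, act=2)
print(glucose_check.human_led_stages())
```

Writing the profile down this way shows at a glance that a tool can be highly automated at acquiring data while leaving decisions and actions with the clinician.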

Dynamic, Not Static

Clinicians rarely stay at one rung. During a code blue, you may want ventilator adjustments at Level 9 (machine acts unless stopped); during routine rounds, the same ventilator should drop to Level 5 (machine interprets, you decide).

Think of automation level as a context-aware variable, one that can be written into order sets, service-line policies, or even individual patient profiles.

Clinical example: Imagine tying the automation level to the patient’s SOFA score¹ or surge capacity status. In a calm ICU, keep decision authority with humans; in a mass-casualty surge, let the system escalate to auto-titration with rapid-cycle auditing.
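A minimal sketch of automation level as a context-aware variable might look like the following. Everything here is hypothetical: the function name, the SOFA threshold of 12, and the choice of Levels 5 and 9 are placeholders rather than clinical guidance. The sketch also assumes that higher acuity raises the level, mirroring the code-blue example above; a unit could just as reasonably decide the opposite.

```python
def select_automation_level(sofa_score: int, surge_active: bool, *,
                            routine_level: int = 5,
                            escalated_level: int = 9,
                            sofa_threshold: int = 12) -> int:
    """Pick an automation level from patient acuity and unit surge status.

    All defaults are illustrative assumptions, not validated thresholds.
    """
    if not 0 <= sofa_score <= 24:
        raise ValueError("SOFA score ranges from 0 to 24")
    # Escalate when the unit is in surge or the patient crosses the
    # (hypothetical) acuity threshold; otherwise humans keep the decision.
    if surge_active or sofa_score >= sofa_threshold:
        return escalated_level
    return routine_level


print(select_automation_level(sofa_score=4, surge_active=False))   # calm ICU
print(select_automation_level(sofa_score=4, surge_active=True))    # surge
```

Because the thresholds are keyword arguments, the same function could be driven from an order set or a service-line policy instead of hard-coded defaults.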

Building a Complementary Stack

Map micro-tasks to levels. Break a service (e.g., hospital medicine) into its constituent tasks and assign a maximum allowable automation level to each.

Design for graceful degradation. If the AI fails, can the human step in one level down without losing situational awareness?

Instrument feedback loops. Record how often humans override Level 7–9 suggestions. High veto rates can uncover bias, brittle models, or workflow mismatch.

Educate to the ladder. This comes back to training: residents should learn to ask, “What level is this tool?” just as they ask for a drug’s half-life.
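The first and third steps above lend themselves to a small sketch: a table of per-task ceilings and a tally of how often Level 7–9 suggestions get vetoed. The task names, ceiling values, and the event-tuple format are assumptions made for illustration, as is the choice to treat unmapped tasks as fully manual.

```python
from collections import Counter

# Hypothetical ceilings for a hospital-medicine service.
MAX_LEVEL = {
    "glucose check": 8,
    "dose calculation": 6,
    "discharge planning": 4,
}


def clamp_level(task: str, requested: int) -> int:
    """Never run a task above its ceiling; unmapped tasks stay at Level 1.

    Defaulting unknown tasks to Level 1 is an assumption of this sketch.
    """
    return min(requested, MAX_LEVEL.get(task, 1))


def override_rates(events):
    """Per-task veto rate for Level 7-9 suggestions.

    `events` is an iterable of (task, level, overridden) tuples.
    """
    shown, vetoed = Counter(), Counter()
    for task, level, overridden in events:
        if 7 <= level <= 9:
            shown[task] += 1
            vetoed[task] += int(overridden)
    return {task: vetoed[task] / shown[task] for task in shown}


log = [
    ("glucose check", 8, False),
    ("glucose check", 8, True),
    ("glucose check", 7, False),
    ("glucose check", 7, True),
    ("dose calculation", 5, True),   # below Level 7, so not counted
]
print(clamp_level("discharge planning", 9))
print(override_rates(log))
```

A veto rate that climbs for one task but not others is a prompt to look for bias, a brittle model, or a workflow mismatch in that task specifically.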

Pitfalls to Watch

Automation bias & complacency

Level 6–9 tools can create a subtle trust trap (“AI is always right”); periodic “red-team” alerts keep clinicians re-engaged.

Skill decay

This will need strict attention in medical school and training. If junior staff never perform Level 1–3 monitoring, their clinical smell test atrophies. Rotate roles or run simulation labs.

Liability ambiguity

Malpractice law is still human-centric. Annotating each AI suggestion and human override builds an evidentiary trail. We will focus heavily on this, as healthtech companies do not yet seem willing to take on liability.

Alright, let’s get down to the fun stuff.

Clinical Vignettes That Make the Ladder Real

1. “Two-Speed” Chest-Pain Pathway in the ED

Levels 3 → 5 → 8 (dynamic)

A busy emergency department deploys an AI ECG-triage tool.

Arrival (Level 3) – The system collects and pre-filters 12-lead data, highlighting subtle ST-segment shifts the human eye might miss.

First Read (Level 5) – It runs a deep-learning model that labels the tracing “Possible high-lateral MI, 87 % confidence” and overlays a saliency map. The attending still signs off.

Crowd-Control Mode (Level 8) – When the ED dashboard shows >90 % capacity, the tool auto-orders a stat troponin and pages cardiology unless a physician cancels within five minutes.

Provocation: Would you accept algorithm-initiated troponins only when crowding threatens care delays? What evidence threshold would make you comfortable escalating to Level 9?
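The crowd-control rule in this vignette can be sketched as a capacity check plus an order that fires only if nobody cancels it in time. The 90% threshold and five-minute window come from the vignette; the `PendingOrder` class, its method names, and the use of plain seconds for time are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

CROWDING_THRESHOLD = 0.90     # ">90% capacity" from the vignette
CANCEL_WINDOW_S = 5 * 60      # five-minute physician veto window


def pathway_level(ed_occupancy: float) -> int:
    """Level 8 when the ED is over threshold, else the Level 5 first read."""
    return 8 if ed_occupancy > CROWDING_THRESHOLD else 5


@dataclass
class PendingOrder:
    """An auto-generated order waiting out its veto window (hypothetical)."""
    created_at: float                     # seconds on any consistent clock
    cancelled_at: Optional[float] = None

    def cancel(self, now: float) -> bool:
        """Physician veto; honoured only inside the window."""
        if self.cancelled_at is None and now - self.created_at < CANCEL_WINDOW_S:
            self.cancelled_at = now
            return True
        return False

    def should_fire(self, now: float) -> bool:
        """True once the window has elapsed with no cancellation."""
        return self.cancelled_at is None and now - self.created_at >= CANCEL_WINDOW_S


order = PendingOrder(created_at=0.0)
print(pathway_level(0.95), order.should_fire(now=120.0), order.should_fire(now=300.0))
```

Keeping the cancellation timestamp on the order also gives the audit trail a record of when the physician stepped in.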

2. ICU Ventilator That Talks in “If–Then” Clauses

Levels 6 ↔︎ 9 (patient-specific toggling)

A closed-loop ventilator interprets waveforms (Level 6) and suggests PEEP/FiO₂ combos with a running “explainability ticker” (“Driving pressure trending up; recruitment maneuver recommended”).

During proning, the intensivist activates ‘Auto-Recruit’ (Level 9). The machine executes incremental PEEP ladders unless SpO₂ drops below a clinician-set floor, in which case it downgrades to Level 5 and waits for human input.

Provocation: Could we encode ceiling and floor physiologic triggers for every automated organ support device, essentially “smart circuit-breakers” that dial automation up or down in real time?
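A “smart circuit-breaker” of this kind could be as simple as a latch on a physiologic floor. The sketch below is a toy model, not device logic: the `AutoRecruit` class and its method names are invented for illustration, and the SpO₂ floor is whatever the clinician sets.

```python
class AutoRecruit:
    """Toy model of the Level 6 / Level 9 toggle with a Level 5 fallback."""

    def __init__(self, spo2_floor: float):
        self.spo2_floor = spo2_floor   # clinician-set floor
        self.level = 6                 # default: interpret and suggest

    def activate(self) -> None:
        """Intensivist switches on Auto-Recruit (Level 9)."""
        self.level = 9

    def on_spo2(self, spo2: float) -> int:
        """Feed one SpO2 reading; trip to Level 5 if it falls below the floor."""
        if self.level == 9 and spo2 < self.spo2_floor:
            # Latch at Level 5: only an explicit activate() call from a
            # human brings the machine back up to Level 9.
            self.level = 5
        return self.level


vent = AutoRecruit(spo2_floor=90.0)
vent.activate()
print([vent.on_spo2(x) for x in (94.0, 88.0, 95.0)])
```

The latch is the design choice that matters: a recovered reading does not silently restore autonomy, which matches the vignette’s “waits for human input.”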

3. Oncology Chemo-Dosing Copilot

Levels 4 → 7

Genomic-guided dosing software ingests tumor sequencing and renal function, generating population PK curves (Level 4). It then simulates three dosing schedules, colour-coding toxicity probabilities (Level 7) for the oncologist to choose.

Provocation: If the historical override rate falls below 2 %, is it ethical (or wasteful) to keep the human chooser? At what audit interval should the model be retrained to prevent “silent drift” toward unsafe recommendations?

4. Post-Discharge Heart-Failure Bot

Levels 2 → 10 (graduated autonomy)

A smartphone app passively streams weight and impedance data (Level 2). Early in the program it merely graphs trends for the nurse coach. By 60 days, for low-risk patients who’ve demonstrated engagement, it upgrades itself to Level 10 and sends same-day diuretic prescriptions to the pharmacy under a collaborative practice agreement. If the patient’s frailty index flags newly high risk, the bot downgrades back to Level 5.

Provocation: Should autonomy be earned by patient adherence and revoked by physiologic volatility, making the ladder a two-way street not just for machines but for patients?
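Earned and revocable autonomy can be expressed as a short rule. In this sketch the 60-day mark and Levels 2, 5, and 10 come from the vignette, while the `PatientState` fields and the way “low risk,” “engaged,” and the frailty flag are represented as booleans are simplifying assumptions.

```python
from dataclasses import dataclass


@dataclass
class PatientState:
    """Hypothetical snapshot of one enrolled patient."""
    days_enrolled: int
    low_risk: bool            # risk tier at enrollment
    engaged: bool             # has demonstrated engagement with the app
    frailty_high_risk: bool   # frailty index newly flags high risk


def bot_level(p: PatientState) -> int:
    """Return the bot's automation level for this patient."""
    earned = p.days_enrolled >= 60 and p.low_risk and p.engaged
    if not earned:
        return 2              # passive streaming and trend graphs only
    if p.frailty_high_risk:
        return 5              # autonomy revoked; a human decides again
    return 10                 # prescriptions sent under the practice agreement


print(bot_level(PatientState(75, True, True, False)))
print(bot_level(PatientState(75, True, True, True)))
```

Because the level is recomputed from the current state each time, revocation needs no special handling: a new frailty flag simply yields a lower level on the next evaluation.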

5. Surgical “Black-Ice” Detector

Levels 1 + 9 in parallel

In a robotic laparoscopic suite, an optical sensor constantly scans instruments for micro-slippage due to invisible condensation (“black ice”). The software is allowed to transiently halt instrument motion (Level 9) while simultaneously alerting the surgeon’s HUD (Level 1) so they can override instantly.

Provocation: Is there a class of safety-critical reflexes we should always entrust to machines (like ABS brakes) while keeping humans fully aware, even if the reflex actuates faster than a human could react?

The Critical Clinician’s Call

Adopting Parasuraman’s ladder doesn’t mean racing to Level 10; it means choosing the right rung for the right moment. In practice, the most powerful AI systems will be those that flex, sliding up or down the ladder as patient acuity, data richness, and clinician bandwidth shift hour by hour.

As you evaluate the next algorithmic shiny object, whether an AI scribe or a predictive dosing engine, ask these three questions:

What level of automation is it really delivering?

Do I have the visibility to veto when it matters?

How will this level evolve across the patient’s journey?

Answer them well, and AI becomes less of a black-box intruder and more of a well-trained scrub tech, always in the room, handing you exactly the right instrument at exactly the right time.

I have been pondering a few questions of my own as I learn more about the ladder’s implications:

When should the environment (crowding, surge, home vs hospital) dictate the level rather than the task itself?

Can autonomy be conditional on patient behaviour or model confidence rather than fixed at design time?

Where do we place the moral liability when a Level 9 action saves time in 98 % of cases but harms in 2 %?

¹ The Sequential Organ Failure Assessment (SOFA) score is a tool used in intensive care to quantify the degree of a patient’s organ dysfunction and to track changes over time. It’s most commonly applied in sepsis and critical-illness management to help predict morbidity and mortality. SOFA assesses six organ systems, each scored from 0 (normal) to 4 (most severe dysfunction). The total score ranges from 0 to 24.