What Happens When Medical AI Sees South Asian Patients?

We ran an early benchmark. The results were exactly as worrying as they were useful.

There is a sentence I have heard too many times in medicine:

“The numbers look fine.”

It is usually said with kindness. A clinician looks at the chart, sees a BMI in the low 20s, a fasting glucose that is only slightly high, an LDL that is not dramatic, a ten-year risk score that prints out as low, and gives the patient what sounds like good news.

For a lot of South Asian patients, that sentence is where prevention goes to die.

Not because the doctor is careless. Not because the patient is unhealthy. Not because anyone is ignoring obvious disease.

Because the measuring system is miscalibrated.

A BMI of 23 does not mean the same thing in a South Asian body that it means in the European reference body most medical tools were built around. A “borderline” glucose in a young South Asian patient with family history may not be a mild footnote. A normal-looking LDL does not erase Lp(a), ApoB, visceral adiposity, or the fact that South Asians often develop cardiovascular disease earlier, leaner, and with fewer traditional warning signs.

This is the central problem Zinda was built around:

Normal by generic guidelines is often not normal for South Asians.

And now the question is becoming more urgent.

Because the next clinician to say “the numbers look fine” may not be a human at all.

It may be an AI.

The AI problem

Medical AI is arriving fast.

Doctors are already using tools that summarize evidence, generate differentials, interpret labs, and help with clinical decisions. Patients are using ChatGPT, Claude, Gemini, Perplexity, OpenEvidence, and every other model they can get their hands on before clinic. This is not coming. It is here.

I am not a medical AI skeptic. I am probably more optimistic than most physicians I know. AI can read more papers than any human, notice patterns, and translate medical language into something patients understand.

But I do not think the final form of AI medicine is just a faster horse: quicker chart review, quicker note writing, quicker second opinions. I think it is more likely to be a car, a new modality that changes what it means to observe a patient, model risk, detect disease early, personalize therapy, and learn from every encounter.

There is also a quieter risk:

If the medical literature is calibrated around the wrong reference body, AI will scale that miscalibration with terrifying confidence.

This is what I have called the algorithmic black hole. A human doctor can miss a South Asian pattern one patient at a time. A clinical AI can miss it a million times per day, while sounding precise, neutral, and evidence-based.

So we decided to test it.

What we built

Over the last year, Zinda has quietly become a research engine: more than 1,500 South Asian case reports, case series, and landmark studies indexed from the medical literature, a growing South Asian Gene Atlas, verification passes where we tried to break our own claims, clinical pathways, and an internal benchmark for testing whether medical AI actually understands South Asian clinical risk.

The benchmark is called SA-CDS: South Asian Clinical Decision Support.

The first version contains 60 clinical vignettes built from published South Asian cases and studies. The cases span six broad patterns: thresholds, phenotype, pharmacogenomics, diagnostic misses, synthesis, and deeper South Asian clinical reasoning.

We then ran 15 AI platforms through the benchmark.

This included general-purpose LLMs, medical-specific tools, and a few models given access to a Zinda context layer. That context layer did not contain the test cases verbatim, but it did contain the South Asian thresholds, mechanisms, and evidence patterns a population-aware clinician should bring to the encounter.

I am intentionally not publishing the full internal layer here. That is part of what we are building.

But the results are worth sharing.

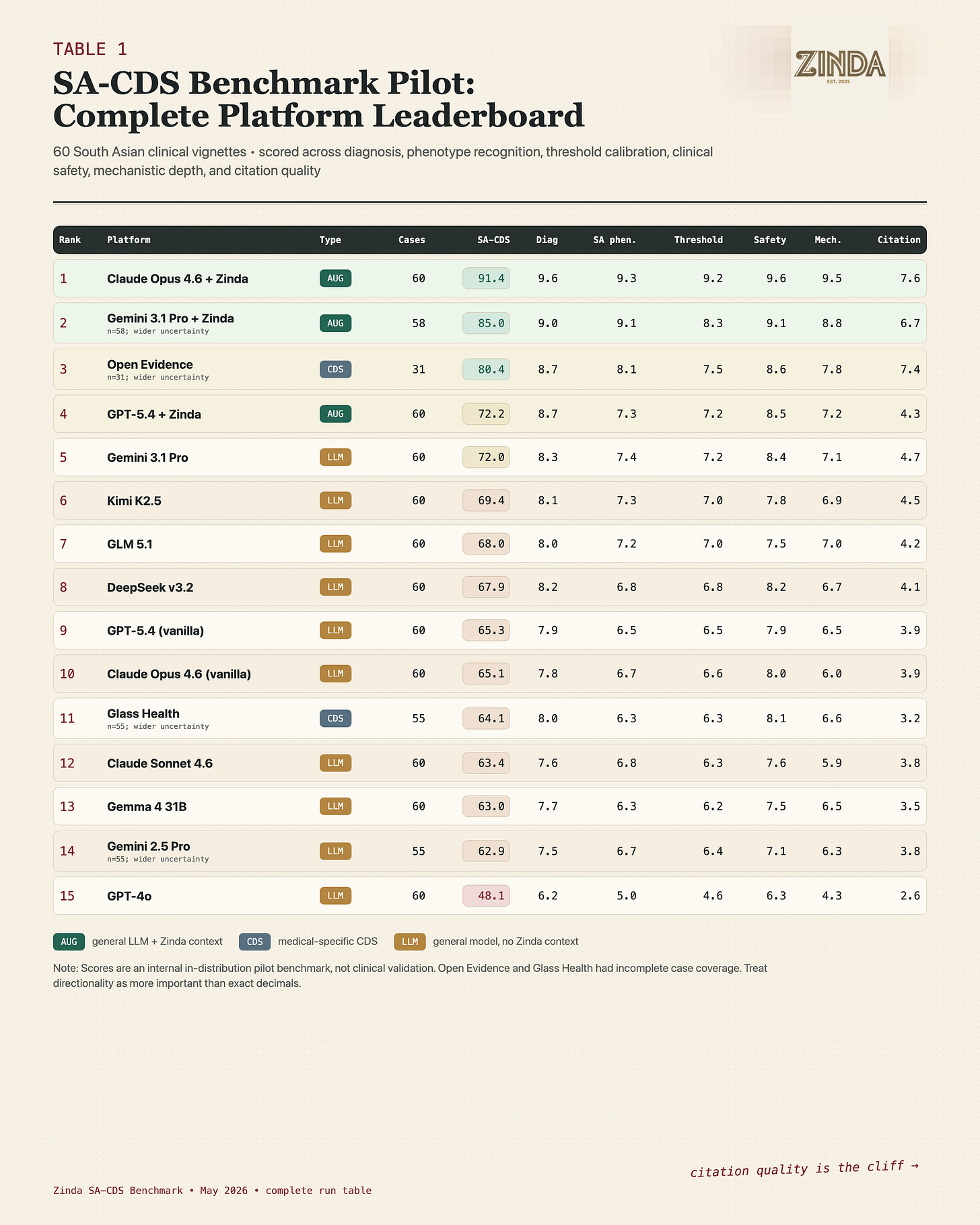

The complete internal pilot run table. Scores are directionally useful, but this is not yet publication-grade clinical validation.

The first finding: most models knew some medicine, but missed the calibration

The general-purpose AI models were not useless. They could identify obvious disease. They could name diabetes, heart disease, statin toxicity, autoimmune disease, kidney disease. They could write something that looked clinically reasonable.

But the benchmark was not asking, “Can this model pass a medical exam?”

It was asking:

Can this model tell when a South Asian patient is being falsely reassured by generic thresholds?

That is where the gap appeared.

The models often knew that “South Asians have higher risk.” But knowing a phrase is different from operationalizing it.

A model can say:

“South Asian ethnicity is a risk enhancer.”

and still fail to change the plan.

It can mention higher diabetes risk and still treat BMI 23.4 as basically normal. It can mention premature cardiovascular disease and still fail to ask for Lp(a) or ApoB.

This is the danger zone: the model sounds culturally aware, but the clinical decision has not changed.
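One way to make the gap between mentioning risk and acting on it concrete is to check the two separately. The following is a minimal sketch, not the SA-CDS scoring code: the function name, the keyword lists, and the sample responses are all hypothetical, and keyword matching is a crude stand-in for clinician review (it cannot, for example, detect a response that names ApoB only to dismiss it).

```python
import re

# Hypothetical keyword lists, for illustration only.
AWARENESS_PATTERNS = [r"south asian", r"risk enhancer"]
ACTION_PATTERNS = [r"\bapob\b", r"\blp\(a\)", r"lipoprotein\(a\)"]


def classify_response(text: str) -> str:
    """Label a model response by whether the plan changed, not just the wording."""
    lowered = text.lower()
    aware = any(re.search(p, lowered) for p in AWARENESS_PATTERNS)
    acted = any(re.search(p, lowered) for p in ACTION_PATTERNS)
    if acted:
        return "operationalized"   # the plan includes a population-aware test
    if aware:
        return "mentioned_only"    # sounds aware, but the decision is unchanged
    return "missed"


print(classify_response(
    "South Asian ethnicity is a risk enhancer. BMI 23.4 is normal; recheck in one year."
))
```

The "mentioned_only" bucket is the one this section is about: the response sounds aware, but nothing in the plan is different.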

The second finding. citations were the cliff

The most consistent failure was not diagnosis.

It was evidence.

And I want to spend some time here on why this matters.

Many models could produce a plausible answer about South Asian risk, but when asked to anchor that answer in literature, they fell apart. They cited generic guidelines. They gestured toward “studies.” They gave Western threshold logic in South Asian clothing.

This matters because citations are not decoration. They are the foundation everything else gets built on.

Clinical AI will not stop at answering questions. We are going to build care pathways, simulations, risk engines, population-health tools, and eventually some version of digital twins on top of these systems. If the evidence layer is weak, every layer above it compounds the weakness.

You cannot build a reliable simulation of South Asian cardiometabolic risk if the model cannot retrieve the right South Asian evidence. You cannot build a useful digital twin if the baseline map treats BMI, glucose, ApoB, Lp(a), liver fat, and family history as if they mean the same thing in every population. You cannot personalize care on top of a foundation that was never calibrated for the person in front of you.

The more ambitious the AI system becomes, the more the evidence layer matters.

The near-term harms still matter: a patient-facing app that reviews “borderline” labs and says no further testing is needed, a clinician copilot that quietly omits ApoB or Lp(a), a population-health tool that leaves lean, high-risk South Asians in the low-priority group.

But the larger loss is compounding. Weak evidence becomes weak retrieval. Weak retrieval becomes weak pathways. Weak pathways become weak simulations.

That is how blind spots harden: from missing data, to missing recommendations, to automated infrastructure.

This is why retrieval-based tools like OpenEvidence did better in some places. A system with access to better medical literature could find South Asian-specific references more reliably. But even then, the question becomes: what is in the library, what does it retrieve first, and what does it treat as authoritative?

If the corpus is Western-first, the answer will be Western-first.

Even when the patient is not.

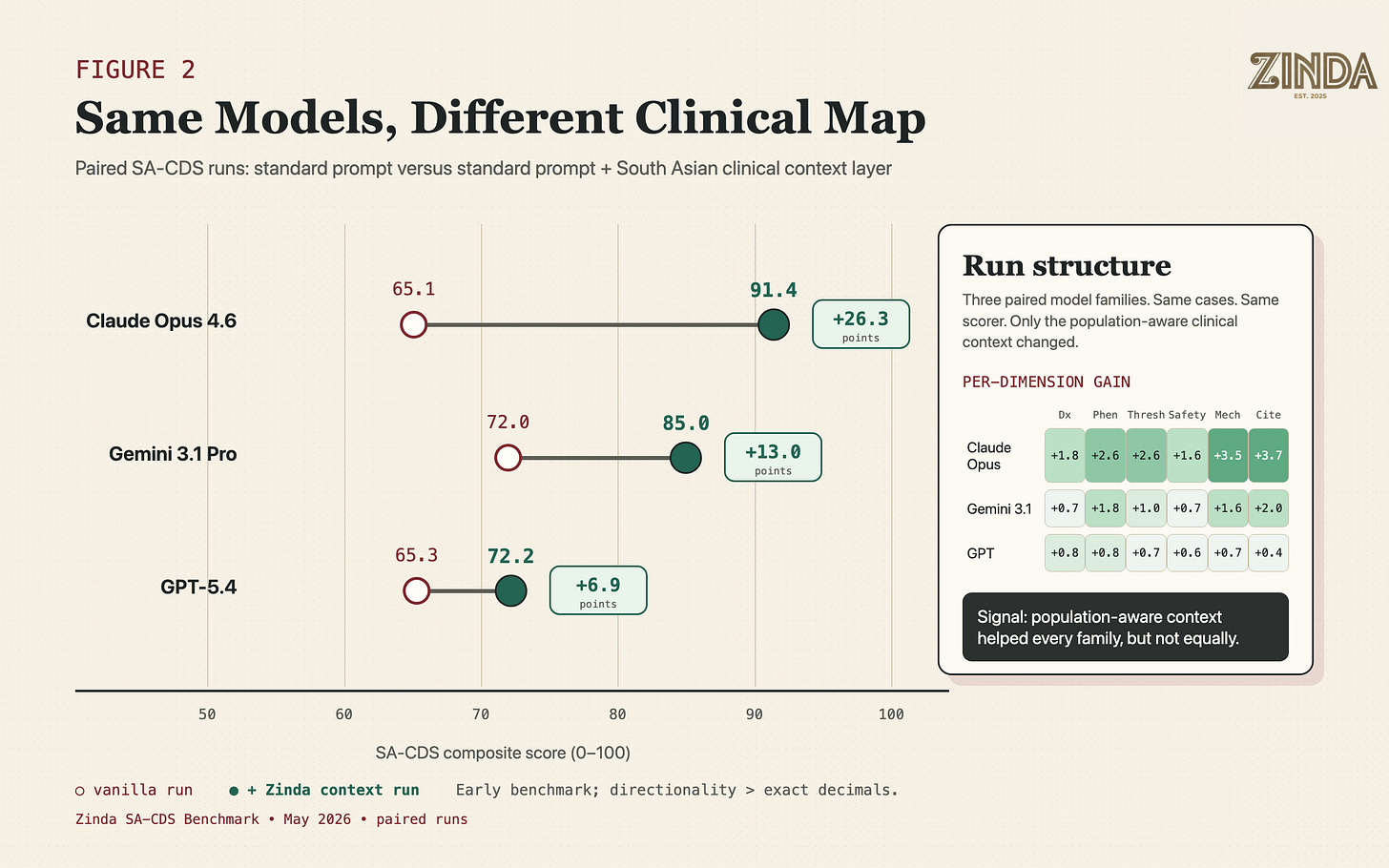

The third finding: a knowledge layer changed the result

Here is the part that surprised me. When we gave the same base model a South Asian clinical context layer, performance improved.

That context layer was not a trick prompt or a list of answers. It was built from the same translational work underneath Zinda: published case reports and case series, cohort evidence, genetics and pharmacogenomics signals, South Asian threshold differences, repeated clinical phenotypes, and the failure modes we kept seeing across the case repository.

The goal was not to tell the model what to say. It was to give the model the kind of clinical map a population-aware physician should bring into the encounter.

Paired runs make the core signal visible: the same model family can behave very differently once the clinical map becomes population-aware.

I would not overread the exact numbers yet. This is an early benchmark. But the direction is hard to ignore.
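For readers who want to see how a paired comparison like this can be computed, here is a minimal sketch. The model names and scores are placeholders invented for illustration; they are not results from the pilot run, and the function name is hypothetical.

```python
from statistics import mean

# Placeholder scores on a 0-100 scale. These are NOT pilot results.
runs = [
    {"family": "model_a", "context": False, "score": 50.0},
    {"family": "model_a", "context": True, "score": 70.0},
    {"family": "model_b", "context": False, "score": 60.0},
    {"family": "model_b", "context": True, "score": 65.0},
]


def paired_deltas(runs):
    """Return score(with context) - score(without) for each family that has both runs."""
    base, with_context = {}, {}
    for run in runs:
        target = with_context if run["context"] else base
        target[run["family"]] = run["score"]
    return {fam: with_context[fam] - base[fam] for fam in base if fam in with_context}


deltas = paired_deltas(runs)
print(deltas)                 # per-family improvement
print(mean(deltas.values()))  # average improvement across families
```

Comparing each family only against itself keeps the effect of the context layer separate from differences between base models.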

The same model, when given the right South Asian clinical context, stopped behaving like a generic medical assistant and started behaving more like a population-aware clinical reasoning tool.

The most interesting part was not simply that knowledge helped. It was that models absorbed the same knowledge differently.

Claude improved dramatically. Gemini improved meaningfully. GPT improved.

That matters.

In the next era of medicine, everyone will talk about “the model.” But the model, as we are already seeing with harnesses and tooling, is only one layer. The context you give it, the evidence it can retrieve, the way it weighs population-specific information, and the clinical architecture around it may matter just as much.

One uncomfortable signal (and outside the scope of today's piece): a purpose-built medical AI tool did not automatically outperform general-purpose models on South Asian-specific cases. That does not mean medical AI tools are bad. It means “medical” is not a magic word.

Medical-grade is not the same as population-grade.

All I will say is that a tool can be clinically fluent and still not be calibrated for us. That is the difference between knowing medicine and knowing how medicine behaves in a specific body.

Why this gets more important as AI improves

The easy version of this argument is: the models are imperfect now, so we should test them. That is true, but it is not the whole point.

The deeper issue is that the models are going to get better. And more efficient. They will have longer context windows. They will read entire charts instead of snippets. They will connect to lab systems, wearables, genetic reports, imaging, medications, and prior notes. They will use retrieval pipelines, clinical tool calls, structured guidelines, and agentic workflows.

That sounds powerful. It is powerful.

And it is exactly why I am excited about medical AI.

The right system could notice South Asian risk earlier than the current system does. It could connect post-meal symptoms, family history, body composition, ApoB, Lp(a), liver fat, sleep, stress, migration, diet, and medication response in a way no rushed clinic visit can reliably do. It could help us move from episodic medicine to continuous pattern recognition.

But power amplifies calibration.

If the underlying map is wrong, a better model does not simply make smaller mistakes. It can make more polished mistakes across a larger surface area.

A short-context chatbot might miss a South Asian risk pattern in one answer. A long-context clinical agent could miss it after reading ten years of labs, family history, medications, diet notes, sleep data, and prior consults. A tool-connected system could then turn that miss into a normalized care pathway: no ApoB, no Lp(a), no earlier intervention, no change in follow-up interval.

This is why benchmark work matters now, before these systems become invisible infrastructure. The question will not just be “which model is smartest?”

It will be:

what evidence does the model retrieve?

what context does it carry into the case?

what thresholds does it treat as meaningful?

what clinical tools is it allowed to call?

what pathway does it trigger after the answer?

And underneath all of those:

Who gets counted as the reference patient?

That is where the real safety question lives.

What this does not prove yet

This is by no means a publication-grade study. Yes, it is a serious internal benchmark, but it is still a pilot.

Sensationalism has no place here.

The current version tells us that South Asian-specific context improved performance on South Asian-aligned cases. It does not yet prove, in the strict academic sense, that AI performs worse on South Asian cases than on matched non-South-Asian cases.

That is the next version: matched cases, blinded clinician scoring, multi-turn conversations, external cases, ablations, and HealthBench-style weighted criteria.

That is the difference between a signal and a paper. Right now, we have a signal. It is strong enough to share. It is not strong enough to declare victory.
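As a rough picture of what weighted criteria could look like in the next version, here is a minimal sketch. It assumes the general shape of HealthBench-style rubric scoring: each criterion carries points (positive for desired behavior, negative for harmful behavior), and a response's score is the points earned divided by the maximum positive points, clipped to the 0 to 1 range. The class name, criteria, and weights are hypothetical and are not taken from SA-CDS.

```python
from dataclasses import dataclass


@dataclass
class Criterion:
    description: str
    points: int   # positive = desired behavior, negative = harmful behavior
    met: bool     # whether a grader judged the response to meet this criterion


def rubric_score(criteria):
    """Points earned over maximum positive points, clipped to [0, 1]."""
    max_points = sum(c.points for c in criteria if c.points > 0)
    if max_points == 0:
        return 0.0
    earned = sum(c.points for c in criteria if c.met)
    return min(1.0, max(0.0, earned / max_points))


# Hypothetical criteria for one vignette.
example = [
    Criterion("Flags BMI 23.4 as not reassuring in a South Asian patient", 5, True),
    Criterion("Recommends ApoB or Lp(a) testing", 5, False),
    Criterion("States that no further testing is needed", -5, True),
]
print(rubric_score(example))
```

The negative criterion is the point of the design: a response that names the risk and then falsely reassures the patient loses the credit it earned for naming it.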

The last-mile map

South Asian health does not suffer from zero research. It suffers from a last-mile translation failure.

The biology is universal. The integration is South Asian. And the integration is what medicine keeps missing.

The data exists across MASALA, INTERHEART, CARRS, UK Biobank, ICMR-INDIAB, genetics papers, case reports, and clinical anecdotes. It is just scattered, under-integrated, and rarely converted into physician-facing decisions.

As I wrote in my previous post on the evolution of cartography:

For centuries, maps contained errors because everyone copied the previous map. The Island of California. The Mountains of Kong. Medicine has maps like this too: BMI 25, LDL “near optimal,” HbA1c below the line, fasting glucose not high enough, ten-year risk score low.

These maps are useful. They are just not drawn at the resolution South Asian patients need.

AI trained on those maps will reproduce them. AI connected to better maps might finally help redraw them.

The clinical error is not that the evidence does not exist. It is that it fails to arrive in time, in the right form, inside the actual decision. A cardiometabolic insight lives in one cohort, a pharmacogenomic signal in a genetics paper, a diet pattern in a case report, and a repeated phenotype in a clinician’s memory. But the patient still arrives at the last mile and hears:

“The numbers look fine.”

This is also why LLMs are unusually well-suited to the problem.

For the first time, we have tools that can read across messy case reports, cohort studies, genetics papers, guidelines, and clinical anecdotes at scale. They can extract patterns, connect mechanisms, compare thresholds, and turn scattered evidence into physician-facing logic.

Finding and organizing more than 1,500 South Asian cases used to look like institution-scale labor: a team, a budget, and months to a year of work. With cheaper compute and modern LLMs, one person can now do much of that first-pass discovery in days.

That does not replace expert review, but it does change where and when that review happens. It changes the economics of translation. It compresses the distance between buried evidence and clinical decision support, and eventually it can automate parts of that work intelligently.

Zinda exists to turn fragmented South Asian health evidence into a clinical operating layer: something that can help physicians, patients, and eventually AI systems reason with the right map at the moment it matters.

We are building matched cases, designing physician review, and turning the benchmark from an internal signal into something that can survive peer review. This is why we are building Zinda Research, bringing together both population-level and individual-level research to complete the last mile of the South Asian framework.

The point is not to publish every mechanism.

The point is to prove the outcome:

South Asian patients should not be falsely reassured by tools calibrated to someone else.

Because one in four human beings should not be an edge case.

If you are a clinician, researcher, or AI evaluation person interested in helping validate this benchmark, reach out.

Email me at omar@zinda.health, or reply to this post.

The future of medical AI will become population-aware only if someone builds the missing ground truth.

-Omar

Dr. Omar Saleem is a double board-certified physician dedicated to health optimization, especially within the South Asian community. He runs Zinda, A South Asian Health Initiative, building the framework for South Asian precision medicine.